
Customize Bare Metal

- 1: Boot modes for EKS Anywhere on Bare Metal

- 2: Customize HookOS for EKS Anywhere on Bare Metal

- 3: DHCP options for EKS Anywhere

1 - Boot modes for EKS Anywhere on Bare Metal

In order to install an Operating System on a machine, the machine needs to boot into the EKS Anywhere Operating System Installation Environment (HookOS). This is accomplished in one of two ways. The first is via the network, also known as PXE boot. This is the default mode. The second is via a virtual CD/DVD device. In EKS Anywhere this is also known as ISO boot.

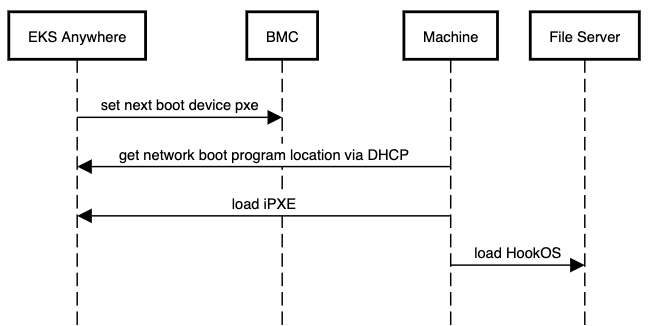

Network Boot

This is the default boot method used for machines in EKS Anywhere. This method requires the EKS Anywhere Bootstrap and Management Clusters to have layer 2 network access to the machines being booted. The network boot process hands out the initial bootloader location via DHCP. In EKS Anywhere this is the iPXE bootloader. The iPXE bootloader then downloads the EKS Anywhere OSIE image and boots into it. The following is a simplified sequence diagram of the network boot process:

Cluster Spec Configuration - Network Boot

As this is the default method, no additional configuration is required. Follow the installation instructions as normal.

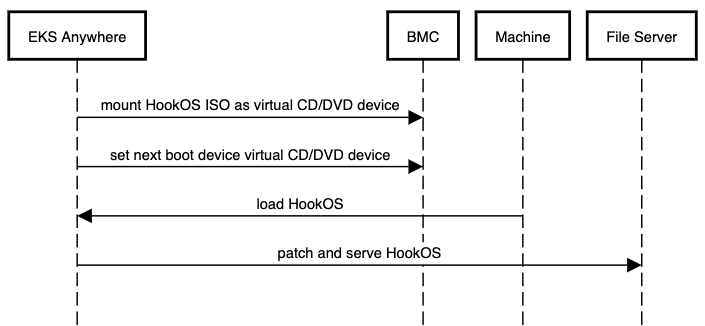

ISO Boot

This method does not use DHCP and does not require the EKS Anywhere Bootstrap and Management Clusters to have layer 2 network access to the machines being booted. Instead, the EKS Anywhere HookOS image is provided as a CD/DVD ISO file by the Tinkerbell stack. The HookOS ISO is then attached to the machine via the BMC as a virtual CD/DVD device. The machine then boots from the virtual CD/DVD device into HookOS. The following is a simplified sequence diagram of the ISO boot process:

Cluster Spec Configuration - ISO Boot

To enable the ISO boot method there is one required field and one optional field.

- Required: TinkerbellDatacenterConfig.spec.isoBoot - Set this field to true to enable the ISO boot mode.

- Optional: TinkerbellDatacenterConfig.spec.hookIsoURL - This field is a string value that specifies the URL to the HookOS ISO file. If this field is not provided, the default HookOS ISO file will be used.

Important

In order to use the ISO boot mode all of the following must be true:

- The BMC info for all machines must be provided in the hardware.csv file.

- All BMCs must have virtual media mounting capabilities with remote HTTP(S) support.

- All BMCs must have Redfish enabled and Redfish must have virtual media mounting capabilities.
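The first prerequisite above can be checked before you create the cluster. The following is a minimal sketch, assuming the header row of hardware.csv names its columns hostname, bmc_ip, bmc_username, and bmc_password; adjust the names if your file differs. The function name missing_bmc_info is hypothetical, and the sketch does not test the Redfish or virtual media requirements.

```bash
# Print the hostname of every row in a hardware CSV that lacks BMC details.
# Assumptions: the header row contains the columns hostname, bmc_ip,
# bmc_username, and bmc_password, and no field contains a quoted comma.
missing_bmc_info() {
  awk -F',' '
    NR == 1 {
      # Map each header name to its column position.
      for (i = 1; i <= NF; i++) col[$i] = i
      if (!("hostname" in col) || !("bmc_ip" in col) ||
          !("bmc_username" in col) || !("bmc_password" in col)) {
        print "hardware CSV header lacks a required column" > "/dev/stderr"
        exit 2
      }
      next
    }
    $0 == "" { next }   # skip blank lines
    {
      if ($(col["bmc_ip"]) == "" || $(col["bmc_username"]) == "" ||
          $(col["bmc_password"]) == "")
        print $(col["hostname"])
    }
  ' "$1"
}

# Usage: missing_bmc_info hardware.csv
```

An empty result means every row carries a BMC address, username, and password.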

    spec:
      isoBoot: true
      hookIsoURL: "http://example.com/hookos.iso"

It is highly recommended that the HookOS ISO file is available locally in your environment and its location is specified in the hookIsoURL field. The default location for the HookOS ISO file is hosted in the cloud by AWS. This means that the Tinkerbell stack, and more specifically the Smee service, will need internet access to this location and Smee will need to pull from this location every time a machine is booted. This can lead to slow boot times and potential boot failures if the internet connection is slow, constrained, or lost.

Run the following commands to download the HookOS ISO file:

    BUNDLE_URL=$(eksctl anywhere version | grep "https://anywhere-assets.eks.amazonaws.com/releases/bundles" | tr -d ' ' | cut -d":" -f2,3)
    IMAGE=$(curl -SsL "$BUNDLE_URL" | grep -E 'uri: .*hook-x86_64-efi-initrd.iso' | uniq | tr -d ' ' | cut -d":" -f2,3)
    wget "$IMAGE"

Make this file available via a web server and put the full URL where this ISO is downloadable in the hookIsoURL field.
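To see what the BUNDLE_URL extraction does, the sketch below runs the same grep, tr, and cut stages against a hard-coded sample line. The sample line, its label text, and its bundle number are assumptions made up for illustration; the real output of eksctl anywhere version may be laid out differently. The function name extract_bundle_url is hypothetical.

```bash
# Hypothetical sample of one line printed by `eksctl anywhere version`.
# The label text and bundle number are assumptions for illustration only.
sample='Bundle Manifest URL: https://anywhere-assets.eks.amazonaws.com/releases/bundles/1/manifest.yaml'

# Same stages as the BUNDLE_URL command: keep the matching line, strip all
# spaces, then split on ":" and keep fields 2 and 3. Two fields are needed
# because the URL itself contains one ":" after "https".
extract_bundle_url() {
  grep "https://anywhere-assets.eks.amazonaws.com/releases/bundles" |
    tr -d ' ' |
    cut -d":" -f2,3
}

printf '%s\n' "$sample" | extract_bundle_url
```

The same split-on-colon approach is what the IMAGE command applies to the uri: line of the bundle manifest.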

2 - Customize HookOS for EKS Anywhere on Bare Metal

To network boot bare metal machines in EKS Anywhere clusters, machines acquire a kernel and initial ramdisk that is referred to as HookOS. A default HookOS is provided when you create an EKS Anywhere cluster. However, there may be cases where you want and/or need to customize the default HookOS, such as to add drivers required to boot your particular type of hardware.

The following procedure describes how to customize and build HookOS. For more information on Tinkerbell’s HookOS Installation Environment, see the Tinkerbell Hook repo.

System requirements

- >= 2G memory

- >= 4 CPU cores (the more cores the better for kernel building)

- >= 20G disk space

Dependencies

Be sure to install all the following dependencies.

- jq

- envsubst

- pigz

- docker

- curl

- bash >= 4.4

- git

- findutils
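Before starting the build, it may help to confirm that the dependencies are present. The following is a minimal sketch; the function names missing_deps and version_at_least_4_4 are hypothetical, and find is used as a stand-in for the findutils package, which is an assumption about how that package shows up on your PATH.

```bash
# Print each listed command that is not found on PATH.
missing_deps() {
  for cmd in "$@"; do
    command -v "$cmd" >/dev/null 2>&1 || printf '%s\n' "$cmd"
  done
}

# Succeed when a "major.minor[.patch]" version string is at least 4.4.
version_at_least_4_4() {
  major=${1%%.*}
  rest=${1#*.}
  minor=${rest%%.*}
  [ "$major" -gt 4 ] || { [ "$major" -eq 4 ] && [ "$minor" -ge 4 ]; }
}

# Commands from the dependency list. findutils is a package rather than a
# command, so `find` stands in for it here (an assumption).
missing_deps jq envsubst pigz docker curl bash git find
```

To check the bash version as well, pass the version string to the second function, for example version_at_least_4_4 "$(bash -c 'echo "${BASH_VERSINFO[0]}.${BASH_VERSINFO[1]}"')".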

- Clone the Hook repo or your fork of that repo:

      git clone https://github.com/tinkerbell/hook.git
      cd hook/

- Run the Linux kernel menuconfig TUI and configure the kernel as needed. Save the config before you exit. The result of this step will be a modified kernel configuration file (./kernel/configs/generic-6.6.y-x86_64).

      ./build.sh kernel-config hook-latest-lts-amd64

- Build the kernel container image. The result of this step will be a container image. Run docker images quay.io/tinkerbell/hook-kernel to see it.

      ./build.sh kernel hook-latest-lts-amd64

- Add the embedded Action images. This creates the file, images.txt, in the images/hook-embedded directory and runs the script, images/hook-embedded/pull-images.sh, to pull and embed the images in the HookOS initramfs. The result of this step will be a populated images file, images/hook-embedded/images.txt, and a Docker directory cache of images, images/hook-embedded/images/.

      BUNDLE_URL=$(eksctl anywhere version | grep "https://anywhere-assets.eks.amazonaws.com/releases/bundles" | tr -d ' ' | cut -d":" -f2,3)
      IMAGES=$(curl -s $BUNDLE_URL | grep "public.ecr.aws/eks-anywhere/tinkerbell/actions/\|public.ecr.aws/eks-anywhere/tinkerbell/tink/tink-worker" | sort | uniq | tr -d ' ' | cut -d":" -f2,3)
      images_file="images/hook-embedded/images.txt"
      rm "$images_file"
      while read -r image; do
        action_name=$(basename "$image" | cut -d":" -f1)
        echo "$image 127.0.0.1/embedded/$action_name" >> "$images_file"
      done <<< "$IMAGES"
      (cd images/hook-embedded; ./pull-images.sh)

- Build the HookOS kernel and initramfs artifacts. The sudo command is needed as the image embedding step uses Docker-in-Docker (DinD) which changes file ownerships to the root user. The result of this step will be the kernel and initramfs. These files are located at ./out/hook/vmlinuz-latest-lts-x86_64 and ./out/hook/initramfs-latest-lts-x86_64 respectively.

      sudo ./build.sh linuxkit hook-latest-lts-amd64

  Note: If you did not customize the kernel configuration, you can use the latest upstream built kernel by setting USE_LATEST_BUILT_KERNEL to yes. Run this command instead of the previous one.

      sudo ./build.sh linuxkit hook-latest-lts-amd64 USE_LATEST_BUILT_KERNEL=yes

- Rename the kernel and initramfs files to vmlinuz-x86_64 and initramfs-x86_64 respectively.

      mv ./out/hook/vmlinuz-latest-lts-x86_64 ./out/hook/vmlinuz-x86_64
      mv ./out/hook/initramfs-latest-lts-x86_64 ./out/hook/initramfs-x86_64

- To use the kernel (vmlinuz-x86_64) and initial ram disk (initramfs-x86_64) when you build your EKS Anywhere cluster, see the description of the hookImagesURLPath field in your cluster configuration file.

3 - DHCP options for EKS Anywhere

In order to facilitate network booting machines, EKS Anywhere bare metal runs its own DHCP server, Boots (a standalone service in the Tinkerbell stack). There can be numerous reasons why you may want to use an existing DHCP service instead of Boots: Security, compliance, access issues, existing layer 2 constraints, existing automation, and so on.

In environments where there is an existing DHCP service, this DHCP service can be configured to interoperate with EKS Anywhere. This document will cover how to make your existing DHCP service interoperate with EKS Anywhere bare metal. In this scenario EKS Anywhere will have no layer 2 DHCP responsibilities.

Note: Currently, Boots is responsible for more than just DHCP. So Boots can’t be entirely avoided in the provisioning process.

Additional Services in Boots

- HTTP and TFTP servers for iPXE binaries

- HTTP server for iPXE script

- Syslog server (receiver)

Process

As a prerequisite, your existing DHCP service must serve host/address/static reservations for all machines that EKS Anywhere bare metal will be provisioning. This means that the IPAM details you enter into your hardware.csv must be used to create host/address/static reservations in your existing DHCP service.
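As one way to keep the reservations in sync with hardware.csv, the sketch below generates dnsmasq dhcp-host lines from the file. It assumes the header row names the columns hostname, mac, and ip_address and that no field contains a quoted comma; the function name csv_to_dhcp_hosts is hypothetical. Other DHCP services need their own reservation syntax, and netmask, gateway, and nameserver settings are not covered here.

```bash
# Emit one dnsmasq "dhcp-host=<mac>,<ip>,<hostname>" line per machine.
# Assumption: the CSV header contains hostname, mac, and ip_address.
csv_to_dhcp_hosts() {
  awk -F',' '
    NR == 1 {
      # Map each header name to its column position.
      for (i = 1; i <= NF; i++) col[$i] = i
      if (!("hostname" in col) || !("mac" in col) || !("ip_address" in col)) {
        print "hardware CSV header lacks hostname, mac, or ip_address" > "/dev/stderr"
        exit 2
      }
      next
    }
    $0 == "" { next }   # skip blank lines
    {
      printf "dhcp-host=%s,%s,%s\n", $(col["mac"]), $(col["ip_address"]), $(col["hostname"])
    }
  ' "$1"
}

# Usage: csv_to_dhcp_hosts hardware.csv >> /etc/dnsmasq.d/reservations.conf
```

Looking columns up by header name rather than by position keeps the sketch working if the column order in your file differs.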

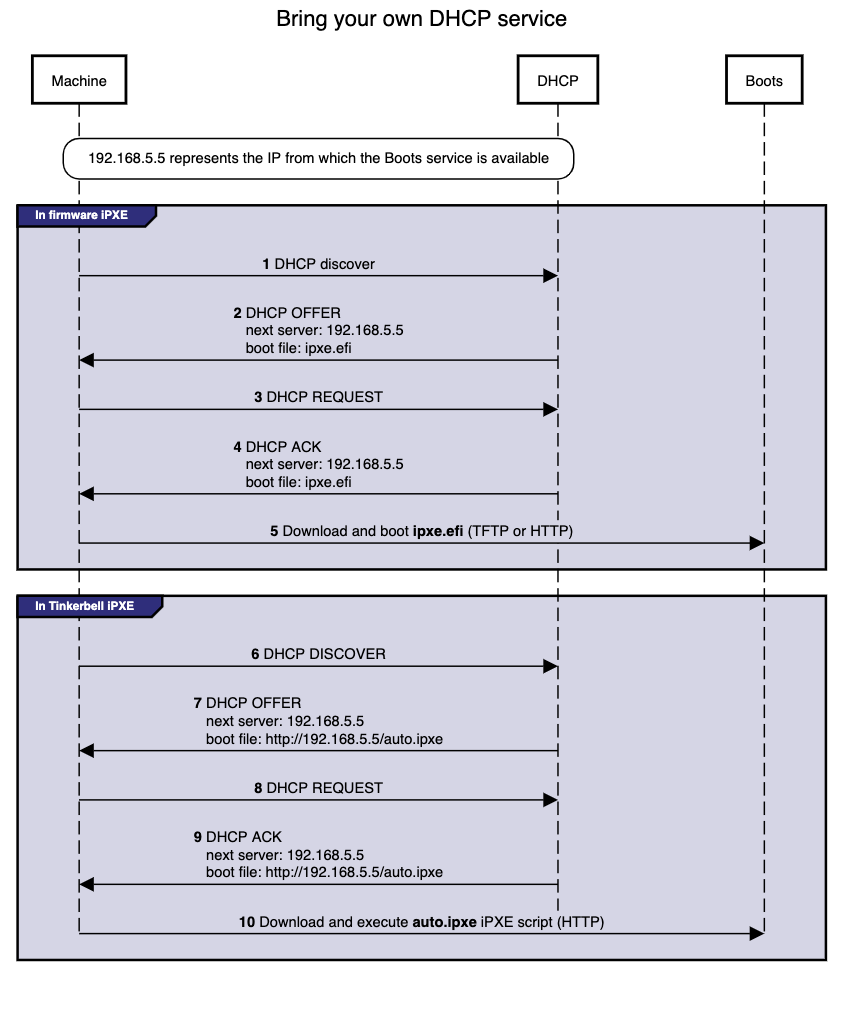

Now, you configure your existing DHCP service to provide the location of the iPXE binary and script. This is a two-step interaction between machines and the DHCP service and enables the provisioning process to start.

- Step 1: The machine broadcasts a request to network boot. Your existing DHCP service then provides the machine with all IPAM info as well as the location of the Tinkerbell iPXE binary (ipxe.efi). The machine configures its network interface with the IPAM info then downloads the Tinkerbell iPXE binary from the location provided by the DHCP service and runs it.

- Step 2: Now with the Tinkerbell iPXE binary loaded and running, iPXE again broadcasts a request to network boot. The DHCP service again provides all IPAM info as well as the location of the Tinkerbell iPXE script (auto.ipxe). iPXE configures its network interface using the IPAM info and then downloads the Tinkerbell iPXE script from the location provided by the DHCP service and runs it.

Note: auto.ipxe is an iPXE script that tells iPXE from where to download the HookOS kernel and initrd so that they can be loaded into memory.

The following diagram illustrates the process described above. Note that the diagram only describes the network booting parts of the DHCP interaction, not the exchange of IPAM info.

Configuration

Below you will find code snippets showing how to add the two-step process from above to an existing DHCP service. Each config checks if DHCP option 77 (user class option) equals “Tinkerbell”. If it does match, then the Tinkerbell iPXE script (auto.ipxe) will be served. If option 77 does not match, then the iPXE binary (ipxe.efi) will be served.
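The decision each config makes can be written out as a small function. This is only an illustration of the logic, not something a DHCP service runs; the function name boot_file_for is hypothetical and 192.168.2.112 is a placeholder for the IP address of the Tinkerbell stack.

```bash
# Return the boot file a DHCP service would hand out for a request.
# $1: value of DHCP option 77 (user class) sent by the client, may be empty
# $2: IP address of the Tinkerbell stack (placeholder in the calls below)
boot_file_for() {
  if [ "$1" = "Tinkerbell" ]; then
    echo "http://$2/auto.ipxe"   # step 2: iPXE is running, serve the script
  else
    echo "ipxe.efi"              # step 1: firmware request, serve the binary
  fi
}

boot_file_for "" 192.168.2.112             # prints: ipxe.efi
boot_file_for "Tinkerbell" 192.168.2.112   # prints: http://192.168.2.112/auto.ipxe
```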

DHCP option: next server

Most DHCP services define a next server option. This option generally corresponds to either DHCP option 66 or the DHCP header sname, reference.

This option is used to tell a machine where to download the initial bootloader, reference.

Special consideration is required for the next server value when using EKS Anywhere to create your initial management cluster. This is because during this initial create phase a temporary bootstrap cluster is created and used to provision the management cluster.

The bootstrap cluster runs the Tinkerbell stack. When the management cluster is successfully created, the Tinkerbell stack is redeployed to the management cluster and the bootstrap cluster is deleted. This means that the IP address of the Tinkerbell stack will change.

As a temporary and one-time step, the IP address used by the existing DHCP service for next server will need to be the IP address of the temporary bootstrap cluster. This is the IP address of the Admin node or, if you pass the CLI flag --tinkerbell-bootstrap-ip, the IP address given to that flag.

Once the management cluster is created, the IP address used by the existing DHCP service for next server will need to be updated to the tinkerbellIP. This IP is defined in your cluster spec at TinkerbellDatacenterConfig.spec.tinkerbellIP. The next server IP will not need to be updated again.

Note: The upgrade phase of a management cluster or the creation of any workload clusters will not require you to change the next server IP in the config of your existing DHCP service.

Code snippets

The following code snippets are generic examples of the config needed to add the two-step process to an existing DHCP service. They do not cover the IPAM info that is also required.

dnsmasq.conf

    dhcp-match=tinkerbell, option:user-class, Tinkerbell
    dhcp-boot=tag:!tinkerbell,ipxe.efi,none,192.168.2.112
    dhcp-boot=tag:tinkerbell,http://192.168.2.112/auto.ipxe
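Because the next server IP changes once, from the bootstrap IP to the tinkerbellIP, it can be convenient to generate the dnsmasq lines from a single value. The following is a minimal sketch that reproduces the dnsmasq lines above for a given IP. The function name render_dnsmasq_boot is hypothetical, and both addresses in the calls are placeholders.

```bash
# Print the dnsmasq boot configuration for a given next server IP.
# $1: IP address to use as the next server and in the auto.ipxe URL
render_dnsmasq_boot() {
  printf 'dhcp-match=tinkerbell, option:user-class, Tinkerbell\n'
  printf 'dhcp-boot=tag:!tinkerbell,ipxe.efi,none,%s\n' "$1"
  printf 'dhcp-boot=tag:tinkerbell,http://%s/auto.ipxe\n' "$1"
}

# Placeholder addresses: the first stands for the bootstrap IP, the second
# for the tinkerbellIP from the cluster spec.
render_dnsmasq_boot 192.168.2.50    # while the management cluster is created
render_dnsmasq_boot 192.168.2.112   # after the management cluster exists
```

Regenerating the file from one variable avoids updating the IP in one dhcp-boot line and missing the other.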

kea.json

    {
      "Dhcp4": {
        "client-classes": [
          {
            "name": "tinkerbell",
            "test": "substring(option[77].hex,0,10) == 'Tinkerbell'",
            "boot-file-name": "http://192.168.2.112/auto.ipxe"
          },
          {
            "name": "default",
            "test": "not(substring(option[77].hex,0,10) == 'Tinkerbell')",
            "boot-file-name": "ipxe.efi"
          }
        ],
        "subnet4": [
          {
            "next-server": "192.168.2.112"
          }
        ]
      }
    }

dhcpd.conf

    if exists user-class and option user-class = "Tinkerbell" {
      filename "http://192.168.2.112/auto.ipxe";
    } else {
      filename "ipxe.efi";
    }
    next-server "192.168.2.112";

Please follow the ipxe.org guide on how to configure Microsoft DHCP server.