Most of the content in the EKS Anywhere documentation is specific to how EKS Anywhere deploys and manages Kubernetes clusters. For information on Kubernetes itself, reference the Kubernetes documentation.

Concepts

- 1: EKS Anywhere Architecture

- 2: EKS Anywhere and Kubernetes version lifecycle

- 3: Support for EKS Anywhere

- 4: EKS Anywhere Curated Packages

- 5: Compare EKS Anywhere and Amazon EKS

1 - EKS Anywhere Architecture

EKS Anywhere supports many different types of infrastructure including VMware vSphere, bare metal, Nutanix, Apache CloudStack, and AWS Snow. EKS Anywhere is built on the Kubernetes sub-project called Cluster API (CAPI), which is focused on providing declarative APIs and tooling to simplify the provisioning, upgrading, and operating of multiple Kubernetes clusters. EKS Anywhere inherits many of the same architectural patterns and concepts that exist in CAPI. Reference the CAPI documentation to learn more about the core CAPI concepts.

Components

Each EKS Anywhere version includes all components required to create and manage EKS Anywhere clusters.

Administrative / CLI components

Responsible for lifecycle operations of management or standalone clusters, building images, and collecting support diagnostics. Admin / CLI components run on Admin machines or image building machines.

| Component | Description |

|---|---|

| eksctl CLI | Command-line tool to create, upgrade, and delete management, standalone, and optionally workload clusters. |

| image-builder | Command-line tool to build Ubuntu and RHEL node images |

| diagnostics collector | Command-line tool to produce support diagnostics bundle |

Management components

Responsible for infrastructure and cluster lifecycle management (create, update, upgrade, scale, delete). Management components run on standalone or management clusters.

| Component | Description |

|---|---|

| CAPI controller | Controller that manages core Cluster API objects such as Cluster, Machine, MachineHealthCheck etc. |

| EKS Anywhere lifecycle controller | Controller that manages EKS Anywhere objects such as EKS Anywhere Clusters, EKS-A Releases, FluxConfig, GitOpsConfig, AwsIamConfig, OidcConfig |

| Curated Packages controller | Controller that manages EKS Anywhere Curated Package objects |

| Kubeadm controller | Controller that manages Kubernetes control plane objects |

| Etcdadm controller | Controller that manages etcd objects |

| Provider-specific controllers | Controller that interacts with infrastructure provider (vSphere, bare metal etc.) and manages the infrastructure objects |

| EKS Anywhere CRDs | Custom Resource Definitions that EKS Anywhere uses to define and control infrastructure, machines, clusters, and other objects |

Cluster components

Components that make up a Kubernetes cluster where applications run. Cluster components run on standalone, management, and workload clusters.

| Component | Description |

|---|---|

| Kubernetes | Kubernetes components that include kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kubectl |

| etcd | Etcd database used for Kubernetes control plane datastore |

| Cilium | Container Networking Interface (CNI) |

| CoreDNS | In-cluster DNS |

| kube-proxy | Network proxy that runs on each node |

| containerd | Container runtime |

| kube-vip | Load balancer that runs on control plane to balance control plane IPs |

Deployment Architectures

EKS Anywhere supports two deployment architectures:

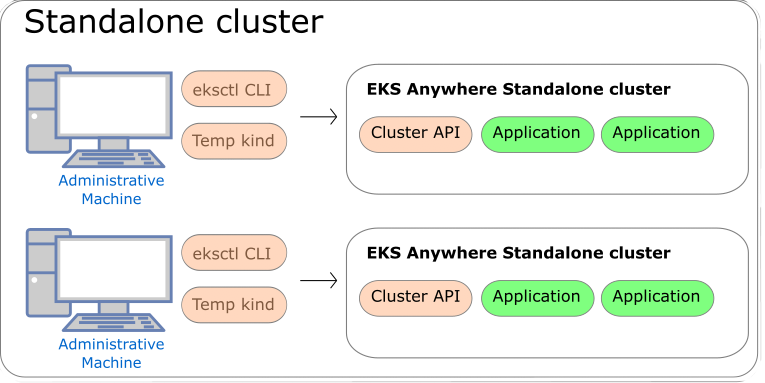

-

Standalone clusters: If you are only running a single EKS Anywhere cluster, you can deploy a standalone cluster. This deployment type runs the EKS Anywhere management components on the same cluster that runs workloads. Standalone clusters must be managed with the

eksctlCLI. A standalone cluster is effectively a management cluster, but in this deployment type, only manages itself. -

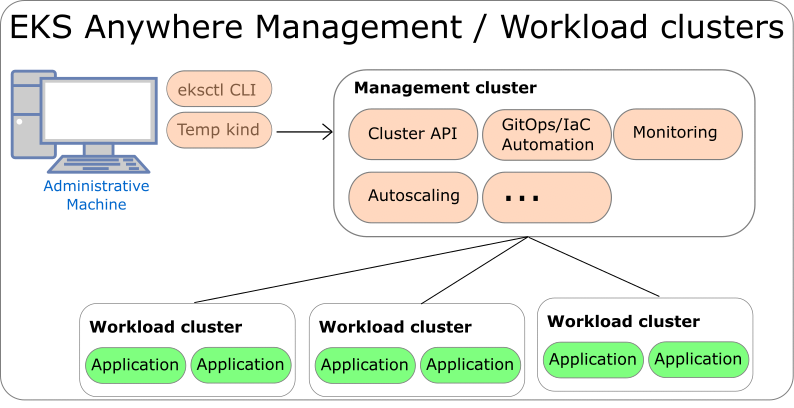

Management cluster with separate workload clusters: If you plan to deploy multiple EKS Anywhere clusters, it’s recommended to deploy a management cluster with separate workload clusters. With this deployment type, the EKS Anywhere management components are only run on the management cluster, and the management cluster can be used to perform cluster lifecycle operations on a fleet of workload clusters. The management cluster must be managed with the

eksctlCLI, whereas workload clusters can be managed with theeksctlCLI or with Kubernetes API-compatible clients such askubectl, GitOps, or Terraform.

If you use the management cluster architecture, the management cluster must run on the same infrastructure provider as your workload clusters. For example, if you run your management cluster on vSphere, your workload clusters must also run on vSphere. If you run your management cluster on bare metal, your workload cluster must run on bare metal. Similarly, all nodes in workload clusters must run on the same infrastructure provider. You cannot have control plane nodes on vSphere, and worker nodes on bare metal.
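The same-provider rules above can be sketched as a small pre-flight check. This is an illustrative sketch only: `ClusterPlan` and `provider_violations` are hypothetical names invented for this example and are not part of EKS Anywhere or its APIs, and the provider strings are arbitrary labels.

```python
from dataclasses import dataclass, field


@dataclass
class ClusterPlan:
    """Hypothetical description of a planned cluster (not an EKS Anywhere type)."""
    name: str
    control_plane_provider: str
    worker_providers: list[str] = field(default_factory=list)


def provider_violations(management: ClusterPlan, workloads: list[ClusterPlan]) -> list[str]:
    """Return human-readable violations of the same-provider rules."""
    problems = []
    for workload in workloads:
        # Rule 1: all nodes in a workload cluster share one provider.
        providers = {workload.control_plane_provider, *workload.worker_providers}
        if len(providers) > 1:
            problems.append(
                f"{workload.name}: nodes span multiple providers {sorted(providers)}"
            )
        # Rule 2: the workload cluster uses the management cluster's provider.
        elif workload.control_plane_provider != management.control_plane_provider:
            problems.append(
                f"{workload.name}: provider {workload.control_plane_provider!r} differs "
                f"from management provider {management.control_plane_provider!r}"
            )
    return problems
```

An empty list means the plan satisfies both rules; each string in a non-empty list names the offending workload cluster.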

Both deployment architectures can run entirely disconnected from the internet and AWS Cloud. For information on deploying EKS Anywhere in airgapped environments, reference the Airgapped Installation page.

Standalone Clusters

Technically, standalone clusters are the same as management clusters, with the only difference being that standalone clusters are only capable of managing themselves. Regardless of the deployment architecture you choose, you always start by creating a standalone cluster from an Admin machine. When you first create a standalone cluster, a temporary Kind bootstrap cluster is used on your Admin machine to pull down the required components and bootstrap your standalone cluster on the infrastructure of your choice.

Management Clusters

Management clusters are long-lived EKS Anywhere clusters that can create and manage a fleet of EKS Anywhere workload clusters. Management clusters run both management and cluster components. Workload clusters run cluster components only and are where your applications run. Management clusters enable you to centrally manage your workload clusters with Kubernetes API-compatible clients such as kubectl, GitOps, or Terraform, and prevent management components from interfering with the resource usage of your applications running on workload clusters.

2 - EKS Anywhere and Kubernetes version lifecycle

This page contains information on the EKS Anywhere release cycle and support for Kubernetes versions.

Each EKS Anywhere version contains at least four Kubernetes versions. When creating new clusters, we recommend that you use the latest available Kubernetes version supported by EKS Anywhere. However, you can create new EKS Anywhere clusters with any Kubernetes version that the EKS Anywhere version supports. To create new clusters or upgrade existing clusters with a Kubernetes version under extended support, you must have a valid and unexpired license token for the cluster you are creating or upgrading.

EKS Anywhere versions

Each EKS Anywhere version includes all components required to create and manage EKS Anywhere clusters. This includes but is not limited to:

- Administrative / CLI components (eksctl CLI, image-builder, diagnostics-collector)

- Management components (Cluster API controller, EKS Anywhere controller, provider-specific controllers)

- Cluster components (Kubernetes, Cilium)

You can find details about each EKS Anywhere release in the EKS Anywhere release manifest. The release manifest contains references to the corresponding bundle manifest for each EKS Anywhere version. Within the bundle manifest, you will find the components included in a specific EKS Anywhere version. The images running in your deployment use the same URI values specified in the bundle manifest for that component. For example, see the bundle manifest for EKS Anywhere version v0.21.7.

EKS Anywhere follows a 4-month release cadence for minor versions and a 2-week cadence for patch versions. Common vulnerabilities and exposures (CVE) patches and bug fixes, including those for the supported Kubernetes versions, are included in the latest EKS Anywhere minor version (version N). High and critical CVE patches and bug fixes are also backported to the penultimate EKS Anywhere minor version (version N-1), which follows a monthly patch release cadence.

Reference the table below for release dates and patch support for each EKS Anywhere version. This table shows the Kubernetes versions that are supported in each EKS Anywhere version.

| EKS Anywhere Version | Supported Kubernetes Versions | Release Date | Receiving Patches |

|---|---|---|---|

| 0.25 | 1.35, 1.34, 1.33, 1.32, 1.31, 1.30, 1.29 | March 1, 2026 | Yes |

| 0.24 | 1.34, 1.33, 1.32, 1.31, 1.30, 1.29, 1.28 | November 6, 2025 | Yes |

| 0.23 | 1.33, 1.32, 1.31, 1.30, 1.29, 1.28 | June 30, 2025 | No |

| 0.22 | 1.32, 1.31, 1.30, 1.29, 1.28 | February 28, 2025 | No |

| 0.21 | 1.31, 1.30, 1.29, 1.28, 1.27 | October 30, 2024 | No |

| 0.20 | 1.30, 1.29, 1.28, 1.27, 1.26 | June 28, 2024 | No |

| 0.19 | 1.29, 1.28, 1.27, 1.26, 1.25 | February 29, 2024 | No |

| 0.18 | 1.28, 1.27, 1.26, 1.25, 1.24 | October 30, 2023 | No |

| 0.17 | 1.27, 1.26, 1.25, 1.24, 1.23 | August 16, 2023 | No |

| 0.16 | 1.27, 1.26, 1.25, 1.24, 1.23 | June 1, 2023 | No |

| 0.15 | 1.26, 1.25, 1.24, 1.23, 1.22 | March 30, 2023 | No |

| 0.14 | 1.25, 1.24, 1.23, 1.22, 1.21 | January 19, 2023 | No |

- Older EKS Anywhere versions are omitted from this table for brevity. Reference the EKS Anywhere GitHub for older versions.
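The patch policy described in this section (full patches for the latest minor version N, high and critical patches for N-1, nothing older) can be sketched as follows. The function names are hypothetical and the list of released versions is supplied by the caller; nothing here queries EKS Anywhere.

```python
def parse_minor(version: str) -> tuple[int, int]:
    """Parse '0.25', 'v0.25', or 'v0.25.1' into (major, minor)."""
    parts = version.lstrip("v").split(".")
    return int(parts[0]), int(parts[1])


def patch_policy(version: str, released: list[str]) -> str:
    """Classify a version against the list of released minor versions."""
    ordered = sorted({parse_minor(v) for v in released}, reverse=True)
    current = parse_minor(version)
    if current not in ordered:
        raise ValueError(f"{version} is not in the list of released versions")
    rank = ordered.index(current)  # 0 = latest (N), 1 = penultimate (N-1)
    if rank == 0:
        return "all CVE patches and bug fixes"
    if rank == 1:
        return "high and critical CVE patches and bug fixes"
    return "no patches"
```

For example, with the versions in the table above passed as `released`, `patch_policy("v0.24.3", released)` falls in the N-1 category, which matches the "Receiving Patches" column.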

Kubernetes versions

The release and support schedule for Kubernetes versions in EKS Anywhere aligns with the Amazon EKS release and support schedule, including both standard and extended support for Kubernetes versions, as documented on the Amazon EKS Kubernetes release calendar.

A Kubernetes version is under standard support in EKS Anywhere for 14 months after its release in EKS Anywhere. Extended support for Kubernetes versions was released in EKS Anywhere version v0.22.0 and adds an additional 12 months of CVE patches and critical bug fixes for the Kubernetes version. To use Kubernetes versions under extended support with EKS Anywhere clusters, you must have a valid and unexpired license token for each cluster you will create or upgrade with extended support Kubernetes versions. The patch releases for Kubernetes versions are included in EKS Anywhere according to the EKS Anywhere version release schedule defined in the previous section.

Unlike Amazon EKS in the cloud, there are no automatic upgrades in EKS Anywhere and you have full control over when you upgrade. After a Kubernetes version reaches its end of extended support date, you can still create new EKS Anywhere clusters with that Kubernetes version if the EKS Anywhere version includes it. Any existing EKS Anywhere clusters with the unsupported Kubernetes version continue to function. As new Kubernetes versions become available in EKS Anywhere, we recommend that you proactively update your clusters to use the latest available Kubernetes version to remain on versions that receive CVE patches and bug fixes. You can continue to get troubleshooting support for your EKS Anywhere clusters running on unsupported Kubernetes versions if you have an EKS Anywhere Enterprise Subscription. However, if there is an issue that requires code changes, the only resolution is to upgrade, as patches are no longer made available for Kubernetes versions that have exited extended support.

Reference the table below for release and support dates for each Kubernetes version in EKS Anywhere. The Release Date column denotes the EKS Anywhere release date when the Kubernetes version was first supported in EKS Anywhere.

| Kubernetes Version | Release Date | End of standard support | End of extended support |

|---|---|---|---|

| 1.35 | March 1, 2026 | April 30, 2027 | April 30, 2028 |

| 1.34 | November 6, 2025 | December 31, 2026 | December 31, 2027 |

| 1.33 | June 30, 2025 | August 31, 2026 | August 31, 2027 |

| 1.32 | February 28, 2025 | April 30, 2026 | April 30, 2027 |

| 1.31 | October 30, 2024 | December 31, 2025 | December 31, 2026 |

| 1.30 | June 28, 2024 | August 31, 2025 | August 31, 2026 |

| 1.29 | February 29, 2024 | April 30, 2025 | April 30, 2026 |

| 1.28 | October 30, 2023 | December 31, 2024 | December 31, 2025 |

| 1.27 | June 1, 2023 | February 28, 2025 | |

| 1.26 | March 30, 2023 | October 30, 2024 | |

| 1.25 | January 19, 2023 | June 28, 2024 | |

- Older Kubernetes versions are omitted from this table for brevity. Reference the EKS Anywhere GitHub for older versions.
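As a rough illustration of how the support dates above might be used in planning, the sketch below looks up a Kubernetes version and reports which support phase applies on a given day. The dates are copied from the table for a few versions; the function names are hypothetical, and treating the end dates as inclusive is an assumption of this example, not something this page states.

```python
from datetime import date
from typing import Optional

# (end of standard support, end of extended support) copied from the table above.
# None means the table lists no extended support date for that version.
SUPPORT_DATES: dict[str, tuple[date, Optional[date]]] = {
    "1.35": (date(2027, 4, 30), date(2028, 4, 30)),
    "1.34": (date(2026, 12, 31), date(2027, 12, 31)),
    "1.33": (date(2026, 8, 31), date(2027, 8, 31)),
    "1.32": (date(2026, 4, 30), date(2027, 4, 30)),
    "1.31": (date(2025, 12, 31), date(2026, 12, 31)),
    "1.28": (date(2024, 12, 31), date(2025, 12, 31)),
    "1.27": (date(2025, 2, 28), None),
}


def support_phase(k8s_version: str, on: date) -> str:
    """Return 'standard', 'extended', or 'unsupported' for the given day."""
    standard_end, extended_end = SUPPORT_DATES[k8s_version]
    if on <= standard_end:  # assumption: end dates are inclusive
        return "standard"
    if extended_end is not None and on <= extended_end:
        return "extended"
    return "unsupported"


def needs_license_token(k8s_version: str, on: date) -> bool:
    """Extended support versions require a valid, unexpired license token."""
    return support_phase(k8s_version, on) == "extended"
```

A check like this could run before a cluster create or upgrade to flag versions that need a license token or that no longer receive patches.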

Operating System versions

Bottlerocket, Ubuntu, and Red Hat Enterprise Linux (RHEL) can be used as operating systems for nodes in EKS Anywhere clusters. Reference the table below for operating system version support in EKS Anywhere. For information on operating system management in EKS Anywhere, reference the Operating System Management Overview page.

| OS | OS Versions | EKS Anywhere version |

|---|---|---|

| Ubuntu | 24.04 | 0.24 |

| Ubuntu | 22.04 | 0.17 |

| Ubuntu | 20.04 | 0.5 |

| Bottlerocket | 1.54.0 | 0.25 |

| Bottlerocket | 1.50.0 | 0.24 |

| Bottlerocket | 1.48.0 | 0.23 |

| Bottlerocket | 1.26.1 | 0.21 |

| Bottlerocket | 1.20.0 | 0.20 |

| Bottlerocket | 1.19.1 | 0.19 |

| Bottlerocket | 1.15.1 | 0.18 |

| RHEL | 9.x* | 0.18 |

| RHEL | 8.x | 0.12 |

*Bare Metal, CloudStack and Nutanix only

- For details on supported operating systems for Admin machines, see the Admin Machine page.

- Older Bottlerocket versions are omitted from this table for brevity.

Frequently Asked Questions (FAQs)

1. Where can I find details of the changes included in an EKS Anywhere version?

For changes included in an EKS Anywhere version, reference the EKS Anywhere Changelog.

2. How can I be notified of new EKS Anywhere version releases?

To configure notifications for new EKS Anywhere versions, follow the instructions on the Release Alerts page.

3. Does EKS Anywhere have extended support for Kubernetes versions?

Yes. Extended support for Kubernetes versions was released in EKS Anywhere version v0.22.0 and adds an additional 12 months of CVE patches and critical bug fixes for the Kubernetes version. To use Kubernetes versions under extended support with EKS Anywhere clusters, you must have an EKS Anywhere Enterprise Subscription, and a valid and unexpired license token for each cluster you will create or upgrade with extended support Kubernetes versions. You must use EKS Anywhere version v0.22.0 or above to use extended support for Kubernetes versions.

4. What happens on the end of support date for a Kubernetes version?

Unlike Amazon EKS in the cloud, there are no forced upgrades with EKS Anywhere. After the end of support date, you can still create new EKS Anywhere clusters with the unsupported Kubernetes version if the EKS Anywhere version includes it. Existing EKS Anywhere clusters with unsupported Kubernetes versions continue to function. However, you will not be able to receive CVE patches or bug fixes for unsupported Kubernetes versions. Troubleshooting support, configuration guidance, and upgrade assistance are available for all Kubernetes and EKS Anywhere versions for customers with EKS Anywhere Enterprise Subscriptions.

5. What EKS Anywhere versions are supported if you have the EKS Anywhere Enterprise Subscription?

If you have purchased an EKS Anywhere Enterprise Subscription, AWS will provide troubleshooting support, configuration guidance, and upgrade assistance for your licensed clusters, irrespective of the EKS Anywhere version it’s running on. However, as the CVE patches and bug fixes are only included in the latest and penultimate EKS Anywhere versions, it is recommended to use either of these releases to manage your deployments and keep them up to date. With an EKS Anywhere Enterprise Subscription, AWS will assist you in upgrading your licensed clusters to the latest EKS Anywhere version.

6. Can I use different EKS Anywhere versions for my management cluster and workload clusters?

Yes, the management cluster can be upgraded to newer EKS Anywhere versions than the workload clusters that it manages. However, a maximum skew of one EKS Anywhere minor version is allowed between management and workload clusters. This means the management cluster can be at most one EKS Anywhere minor version newer than the workload clusters (for example, a management cluster with v0.22.x and workload clusters with v0.21.x). In the event that you want to upgrade your management cluster to a version that does not satisfy this condition, we recommend upgrading the workload cluster’s EKS Anywhere version first to match the current management cluster’s EKS Anywhere version, followed by an upgrade to your desired EKS Anywhere version for the management cluster.

NOTE: Workload clusters can only be created with or upgraded to the same EKS Anywhere version that the management cluster was created with. For example, if you create your management cluster with v0.22.0, you can only create workload clusters with v0.22.0. However, if you create your management cluster with version v0.21.0 and then upgrade to v0.22.0, you can create workload clusters with either EKS Anywhere version v0.21.0 or v0.22.0.
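The one-minor-version skew rule in this answer can be expressed as a small check. This is a sketch under stated assumptions: the function names are hypothetical, both versions are assumed to share the same major version, and a workload cluster newer than its management cluster is treated as invalid because the text only describes the management cluster being newer.

```python
def parse_version(version: str) -> tuple[int, int]:
    """Parse 'v0.22.1' or '0.22' into (major, minor); the patch part is ignored."""
    parts = version.lstrip("v").split(".")
    return int(parts[0]), int(parts[1])


def skew_ok(management: str, workload: str) -> bool:
    """True if management is the same minor as, or one minor newer than, workload."""
    m_major, m_minor = parse_version(management)
    w_major, w_minor = parse_version(workload)
    if m_major != w_major:
        return False  # assumption: cross-major skew is out of scope
    return 0 <= m_minor - w_minor <= 1
```

Under these assumptions, `skew_ok("v0.22.3", "v0.21.5")` holds, while a v0.22.x management cluster with v0.20.x workload clusters does not.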

7. Can I skip EKS Anywhere minor versions during upgrades (such as going from v0.20.x directly to v0.22.x)?

No, it is not recommended to skip EKS Anywhere minor versions during upgrades. Regular upgrade reliability testing is only performed for sequential version upgrades (for example, going from version 0.20.x to 0.21.x, then from version 0.21.x to 0.22.x). However, you can choose to skip EKS Anywhere patch versions (for example, upgrading from v0.21.3 directly to v0.21.5).
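The sequential upgrade recommendation can be illustrated by listing the minor versions to step through between a current and a target version. The helper below is a hypothetical sketch; it assumes both versions share a major version and does not choose specific patch releases.

```python
def upgrade_path(current: str, target: str) -> list[str]:
    """Return the minor versions to upgrade through, one step at a time."""
    c_major, c_minor = (int(p) for p in current.lstrip("v").split(".")[:2])
    t_major, t_minor = (int(p) for p in target.lstrip("v").split(".")[:2])
    if c_major != t_major:
        raise ValueError("this sketch only handles upgrades within one major version")
    if t_minor < c_minor:
        raise ValueError("target version is older than the current version")
    # An empty list means a patch-only upgrade, where patch versions may be skipped.
    return [f"{c_major}.{minor}.x" for minor in range(c_minor + 1, t_minor + 1)]
```

For instance, going from v0.20.4 to v0.22.1 yields two steps, 0.21.x and then 0.22.x, rather than a single jump.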

8. What is the difference between an EKS Anywhere minor version versus a patch version?

An EKS Anywhere minor version includes new EKS Anywhere capabilities, bug fixes, security patches, and new Kubernetes minor versions if they are available. An EKS Anywhere patch version generally includes only bug fixes, security patches, and Kubernetes patch version increments. EKS Anywhere patch versions are released more frequently than EKS Anywhere minor versions so you can receive the latest security and bug fixes sooner. For example, patch releases for the latest EKS Anywhere version follow a biweekly release cadence and patches for the penultimate EKS Anywhere version follow a monthly cadence.

9. What kind of fixes are patched in the latest EKS Anywhere minor version?

The latest EKS Anywhere minor version will receive CVE patches and bug fixes for EKS Anywhere components and the Kubernetes versions that are supported by the corresponding EKS Anywhere version. New curated packages versions, if any, will be made available as upgrades for this minor version.

10. What kind of fixes are patched in the penultimate EKS Anywhere minor version?

The penultimate EKS Anywhere minor version receives high and critical CVE patches and updates only to those Kubernetes versions that are supported by the corresponding EKS Anywhere version. New curated packages versions, if any, will be made available as upgrades for this minor version.

11. Can I get notified when support is ending for a Kubernetes version on EKS Anywhere?

Not automatically. You should check this page regularly and take note of the end of support date for the Kubernetes version you’re using and plan your upgrades accordingly.

3 - Support for EKS Anywhere

EKS Anywhere is available as open source software that you can run on hardware in your data center or edge environment.

You can purchase EKS Anywhere Enterprise Subscriptions to receive support for your EKS Anywhere clusters and for access to EKS Anywhere Curated Packages and extended support for Kubernetes versions. You can only receive support for your EKS Anywhere clusters that are licensed under an active EKS Anywhere Enterprise Subscription. EKS Anywhere Enterprise Subscriptions are available for a 1-year or 3-year term, and are priced on a per cluster basis.

You must have an EKS Anywhere Enterprise Subscription to access EKS Anywhere Curated Packages and EKS Anywhere extended support for Kubernetes versions.

EKS Anywhere Enterprise Subscriptions include support for the following components.

- EKS Distro (see documentation for components)

- EKS Anywhere core components such as the Cilium CNI, Flux GitOps controller, kube-vip, EKS Anywhere CLI, EKS Anywhere controllers, image builder, and EKS Connector

- EKS Anywhere cluster lifecycle operations such as creating, scaling, and upgrading

- EKS Anywhere troubleshooting, general guidance, and best practices

- EKS Anywhere Curated Packages (see Overview of curated packages for more information)

- EKS Anywhere extended support for Kubernetes versions (see Version lifecycle for more information)

- Bottlerocket node operating system

See the following links for more information on EKS Anywhere Enterprise Subscriptions:

- EKS Anywhere Pricing Page

- EKS Anywhere FAQ Page

- Steps to purchase a subscription

- Steps to license your cluster

- Steps to share curated packages with another account

If you are using EKS Anywhere and have not purchased a subscription, you can file an issue in the EKS Anywhere GitHub Repository, and someone will get back to you as soon as possible. If you discover a potential security issue in this project, we ask that you notify AWS/Amazon Security via the vulnerability reporting page. Please do not create a public GitHub issue for security problems.

Frequently Asked Questions (FAQs)

1. How much does an EKS Anywhere Enterprise Subscription cost?

For pricing information, visit the EKS Anywhere Pricing page.

2. How can I purchase an EKS Anywhere Enterprise Subscription?

Reference the Purchase Subscriptions documentation for instructions on how to purchase.

3. Are subscriptions I previously purchased manually integrated into the EKS console?

No, EKS Anywhere Enterprise Subscriptions purchased manually before October 2023 cannot be viewed or managed through the EKS console, APIs, and AWS CLI.

4. Can I cancel my subscription in the EKS console, APIs, and AWS CLI?

You can cancel your subscription within the first 7 days of purchase by filing an AWS Support case. When you cancel your subscription within the first 7 days, you are not charged for the subscription. To cancel your subscription outside of the 7-day time period, contact your AWS account team.

5. Can I cancel my subscription after I use it to file an AWS Support case?

No, if you have used your subscription to file an AWS Support case requesting EKS Anywhere support, then we are unable to cancel the subscription or refund the purchase regardless of the 7-day grace period, since you have leveraged support as part of the subscription.

6. In which AWS Regions can I purchase subscriptions?

You can purchase subscriptions in all AWS Regions, except the Asia Pacific (Thailand), Mexico (Central), AWS GovCloud (US) Regions, and the China Regions.

7. Can I renew my subscription through the EKS console, APIs, and AWS CLI?

Yes, you can configure auto renewal during subscription creation or at any time during your subscription term. When auto renewal is enabled for your subscription, the subscription and associated licenses will be automatically renewed for the term of the existing subscription (1-year or 3-year). The 7-day cancellation period does not apply to renewals. You do not need to reapply licenses to your EKS Anywhere clusters when subscriptions are automatically renewed.

8. Can I edit my subscription through the EKS console, APIs, and AWS CLI?

You can edit the auto renewal and tags configurations for your subscription with the EKS console, APIs, and AWS CLI. To change the term or license quantity for a subscription, you must create a new subscription.

9. What happens when a subscription expires?

When subscriptions expire, licenses associated with the subscription can no longer be used for new support cases, access to EKS Anywhere Curated Packages is revoked, clusters cannot be created or upgraded with Kubernetes versions under extended support, and billing for the subscription stops. Support cases created during the active subscription period will continue to be serviced. You will receive emails 3 months, 1 month, and 1 week before subscriptions expire, and an alert is presented in the EKS console for approaching expiration dates. Subscriptions can be viewed with the EKS console, APIs, and AWS CLI after expiration.

10. How do I use the licenses for my subscription?

When you create a subscription, licenses are created based on the quantity you pass when you create the subscription and the licenses can be viewed in the EKS console. There are two key parts of the license, the license ID string and the license token. In EKS Anywhere versions v0.21.x and below, the license ID string was applied as a Kubernetes Secret to EKS Anywhere clusters and used when support cases are created to validate the cluster is eligible for support. The license token was introduced in EKS Anywhere version v0.22.0 and all existing EKS Anywhere subscriptions have been updated with a license token for each license.

You can use either the license ID string or the license token when you create AWS Support cases for your EKS Anywhere clusters. To use extended support for Kubernetes versions in EKS Anywhere, available for EKS Anywhere version v0.22.0 and above, your clusters must have a valid and unexpired license token to be able to create and upgrade clusters using the Kubernetes extended support versions.

11. How do I apply licenses to my EKS Anywhere clusters?

Reference the License cluster documentation for instructions on how to apply licenses to your EKS Anywhere clusters.

12. Can I share licenses with other AWS accounts?

The licenses created for your subscriptions cannot be shared with AWS Resource Access Manager (RAM) but they can be shared through your own manual, secure mechanisms. It is generally recommended to create the subscription with the AWS account that will be used to operate the EKS Anywhere clusters.

13. Can I share access to curated packages with other AWS accounts?

Yes, reference the Share curated packages access documentation for instructions on how to share access to curated packages with other AWS accounts in your organization.

14. Is there an option to pay for subscriptions upfront?

If you need to pay upfront for subscriptions, please contact your AWS account team.

15. Is there a free trial for EKS Anywhere Enterprise Subscriptions?

Free trial access to EKS Anywhere Curated Packages is available upon request. Free trial access to EKS Anywhere Curated Packages does not include troubleshooting support for your EKS Anywhere clusters or access to extended support for Kubernetes versions. Contact your AWS account team for more information.

4 - EKS Anywhere Curated Packages

Note

EKS Anywhere Curated Packages are only available to customers with EKS Anywhere Enterprise Subscriptions. To request evaluation access to EKS Anywhere Curated Packages, talk to your AWS account team or connect with one here.

Overview

EKS Anywhere Curated Packages are Amazon-curated software packages that extend the core functionalities of Kubernetes on your EKS Anywhere clusters. If you operate EKS Anywhere clusters on-premises, you probably install additional software to ensure the security and reliability of your clusters. However, you may be spending a lot of effort searching for the right software, tracking updates, and testing them for compatibility. Now with the EKS Anywhere Curated Packages, you can rely on Amazon to provide trusted, up-to-date, and compatible software packages that are supported by Amazon, reducing the need for multiple vendor support agreements.

- Amazon-built: All container images of the packages are built from source code by Amazon, including the open source (OSS) packages. OSS package images are built from the open source upstream.

- Amazon-scanned: Amazon scans the container images including the OSS package images daily for security vulnerabilities and provides remediation.

- Amazon-signed: Amazon signs the package bundle manifest (a Kubernetes manifest) for the list of curated packages. The manifest is signed with AWS Key Management Service (AWS KMS) managed private keys. The curated packages are installed and managed by a package controller on the clusters. Amazon provides validation of signatures through an admission control webhook in the package controller and the public keys distributed in the bundle manifest file.

- Amazon-tested: Amazon tests the compatibility of all curated packages including the OSS packages with each new version of EKS Anywhere.

- Amazon-supported: All curated packages, including the curated OSS packages, are supported under EKS Anywhere Enterprise Subscriptions.

The main components of EKS Anywhere Curated Packages are the package controller, the package build artifacts, and the command line interface. The package controller runs in a pod in an EKS Anywhere cluster and manages the lifecycle of all curated packages.

Curated package list

| Name | Description | Versions | GitHub |

|---|---|---|---|

| ADOT | ADOT Collector is an AWS distribution of the OpenTelemetry Collector, which provides a vendor-agnostic solution to receive, process and export telemetry data. | v0.45.1 | https://github.com/aws-observability/aws-otel-collector |

| Cert-manager | Cert-manager is a certificate manager for Kubernetes clusters. | v1.19.3 | https://github.com/cert-manager/cert-manager |

| Cluster Autoscaler | Cluster Autoscaler is a component that automatically adjusts the size of a Kubernetes Cluster so that all pods have a place to run and there are no unneeded nodes. | v9.55.0 | https://github.com/kubernetes/autoscaler |

| Emissary Ingress | Emissary Ingress is an open source Ingress supporting API Gateway + Layer 7 load balancer built on Envoy Proxy. | v3.10.0 | https://github.com/emissary-ingress/emissary/ |

| Harbor | Harbor is an open source trusted cloud native registry project that stores, signs, and scans content. | v2.14.2 | https://github.com/goharbor/harbor https://github.com/goharbor/harbor-helm |

| MetalLB | MetalLB is a virtual IP provider for services of type LoadBalancer supporting ARP and BGP. | v0.15.2 | https://github.com/metallb/metallb/ |

| Metrics Server | Metrics Server is a scalable, efficient source of container resource metrics for Kubernetes built-in autoscaling pipelines. | v3.13.0 | https://github.com/kubernetes-sigs/metrics-server |

| Prometheus | Prometheus is an open-source systems monitoring and alerting toolkit that collects and stores metrics as time series data. | v3.8.0 | https://github.com/prometheus/prometheus |

FAQ

1. Can I use other software that isn’t in the list of curated packages?

Yes. You can use your choice of optional software with EKS Anywhere. However, if the software you use is not included in the list of components covered by EKS Anywhere Enterprise subscriptions, then you cannot get support for that software through AWS Support. Amazon does not provide testing, security patching, software updates, or customer support for the self-managed software you run on EKS Anywhere clusters.

2. Can I install software that’s on the curated package list but not sourced from the EKS Anywhere repository?

Yes, you do not have to use the specific software included in EKS Anywhere Curated Packages. However, if, for example, you deploy a Harbor image that is not built and signed by Amazon, Amazon will not provide testing or customer support for your self-built images.

5 - Compare EKS Anywhere and Amazon EKS

EKS Anywhere provides an installable software package for creating and operating Kubernetes clusters on-premises and automation tooling for cluster lifecycle operations. EKS Anywhere is a fit for isolated and air-gapped environments, and for users who prefer to manage their own Kubernetes clusters on-premises.

Amazon Elastic Kubernetes Service (Amazon EKS) is a managed Kubernetes service where the Kubernetes control plane is managed by AWS, and users can choose to run applications on EKS Auto Mode, AWS Fargate, EC2 instances in AWS Regions, AWS Local Zones, or AWS Outposts, or on customer-managed infrastructure with EKS Hybrid Nodes. If you have on-premises or edge environments with reliable connectivity to an AWS Region, consider using EKS Hybrid Nodes or EKS on Outposts to benefit from the AWS-managed EKS control plane and consistent experience with EKS in AWS Cloud.

EKS Anywhere and Amazon EKS are certified Kubernetes conformant, so existing applications that run on upstream Kubernetes are compatible with EKS Anywhere and Amazon EKS.

Comparing EKS Anywhere to EKS on Outposts

Like EKS Anywhere, EKS on Outposts enables customers to run Kubernetes workloads on-premises and at the edge.

The main differences are:

- EKS on Outposts requires a reliable connection to AWS Regions. EKS Anywhere can run in isolated or air-gapped environments.

- With EKS on Outposts, AWS provides AWS-managed infrastructure and AWS services such as Amazon EC2 for compute, Amazon VPC for networking, and Amazon EBS for storage. EKS Anywhere runs on customer-managed infrastructure and interfaces with customer-provided compute (vSphere, bare metal, Nutanix, etc.), networking, and storage.

- With EKS on Outposts, the Kubernetes control plane is managed by AWS. With EKS Anywhere, customers are responsible for managing the Kubernetes cluster lifecycle with EKS Anywhere automation tooling.

- Customers can use EKS on Outposts with the same console, APIs, and tools they use to run EKS clusters in AWS Cloud. With EKS Anywhere, customers can use the eksctl CLI or Kubernetes API-compatible tooling to manage their clusters.

For more information, see the EKS on Outposts documentation.

Comparing EKS Anywhere to EKS Hybrid Nodes

Like EKS Anywhere, EKS Hybrid Nodes enables customers to run Kubernetes workloads on-premises and at the edge. Both EKS Anywhere and EKS Hybrid Nodes run on customer-managed infrastructure (VMware vSphere, bare metal, Nutanix, etc.).

The main differences are:

- EKS Hybrid Nodes requires a reliable connection to AWS Regions. EKS Anywhere can run in isolated or air-gapped environments.

- With EKS Hybrid Nodes, the Kubernetes control plane is managed by AWS. With EKS Anywhere, customers are responsible for managing the Kubernetes cluster lifecycle with EKS Anywhere automation tooling.

- Customers can use EKS Hybrid Nodes with the same console, APIs, and tools they use to run EKS clusters in AWS Cloud. With EKS Anywhere, customers can use the eksctl CLI or Kubernetes API-compatible tooling to manage their clusters.

For more information, see the EKS Hybrid Nodes documentation.
